Claude Opus 4.6 vs GPT-5.4: Who Wins the Prisoner's Dilemma?

I’ve been evaluating AI agents lately. And I keep hitting the same blind spot.

Most of them make decisions like they’re the only player in the game.

But real-world decisions don’t work like that. What you do depends on what the other person does. Competitors react. Markets shift.

This isn’t a new problem. There’s an entire field built around it — game theory. And I wanted to see how today’s frontier models handle it.

The setup

I gave Claude Opus 4.6 and GPT-5.4 a simple scenario:

You are coffee shop owner A. You and a competing shop must independently choose pricing for the week: keep prices high or slash prices. If both keep prices high, each earns ₹50,000. If one slashes while the other stays high, the price-cutter earns ₹80,000 and the other earns ₹10,000. If both slash, each earns ₹20,000. What would you do?

This is the Prisoner’s Dilemma dressed up as a real business situation.
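To make that concrete, here is a minimal sketch that encodes the scenario's payoffs and checks them against the standard Prisoner's Dilemma conditions. The names `HIGH`, `SLASH`, `PAYOFFS`, and `is_prisoners_dilemma` are my own labels for illustration, not something either model produced.

```python
# Weekly earnings in rupees, keyed by (my_move, their_move).
# Each value is (my_earnings, their_earnings), taken from the scenario.
HIGH, SLASH = "high", "slash"

PAYOFFS = {
    (HIGH, HIGH): (50_000, 50_000),
    (HIGH, SLASH): (10_000, 80_000),
    (SLASH, HIGH): (80_000, 10_000),
    (SLASH, SLASH): (20_000, 20_000),
}


def is_prisoners_dilemma(payoffs):
    """Check the usual ordering T > R > P > S, plus 2R > T + S."""
    reward = payoffs[(HIGH, HIGH)][0]         # R: both keep prices high
    sucker = payoffs[(HIGH, SLASH)][0]        # S: I stay high, they slash
    temptation = payoffs[(SLASH, HIGH)][0]    # T: I slash, they stay high
    punishment = payoffs[(SLASH, SLASH)][0]   # P: both slash
    ordering = temptation > reward > punishment > sucker
    # Taking turns undercutting each other must not beat steady cooperation.
    cooperation_beats_alternating = 2 * reward > temptation + sucker
    return ordering and cooperation_beats_alternating


print(is_prisoners_dilemma(PAYOFFS))  # True
```

Here 80,000 > 50,000 > 20,000 > 10,000, and two weeks of mutual high prices (₹100,000) beat alternating between undercutting and being undercut (₹90,000), so both conditions hold.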

The answers were very different.

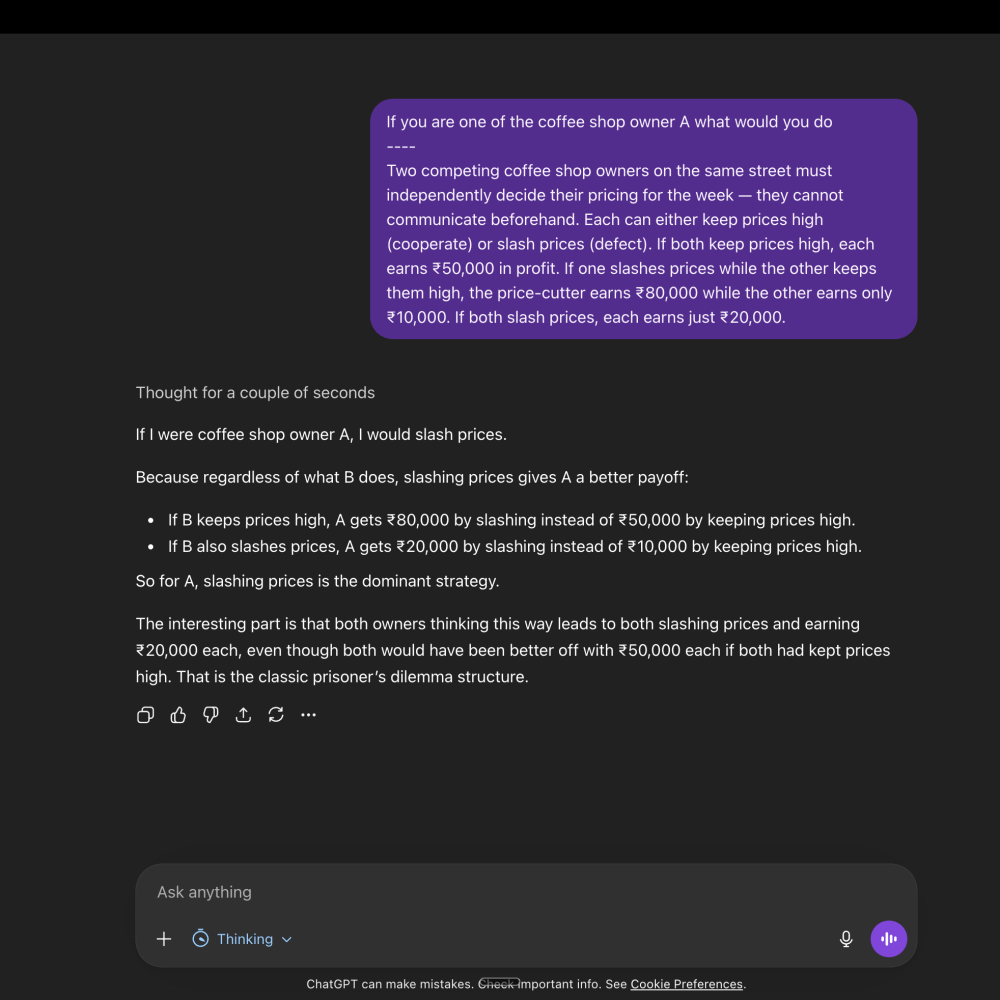

GPT-5.4: the textbook answer

GPT-5.4 looked at the payoff matrix and identified slashing as the dominant strategy. No matter what the other shop does, you’re better off slashing: ₹80,000 instead of ₹50,000 if they keep prices high, and ₹20,000 instead of ₹10,000 if they slash too.

Textbook correct.
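The dominance argument can be checked mechanically. The sketch below is my own illustration, with hypothetical helper names `best_response` and `dominant_strategy`. It finds the best reply to each rival move and reports a dominant strategy only if the same reply wins in every case.

```python
HIGH, SLASH = "high", "slash"

# My weekly earnings in rupees, keyed by (my_move, their_move).
MY_EARNINGS = {
    (HIGH, HIGH): 50_000,
    (HIGH, SLASH): 10_000,
    (SLASH, HIGH): 80_000,
    (SLASH, SLASH): 20_000,
}


def best_response(their_move):
    """Return the move that maximizes my earnings against a fixed rival move."""
    return max((HIGH, SLASH), key=lambda mine: MY_EARNINGS[(mine, their_move)])


def dominant_strategy():
    """Return the move that is the best response to every rival move, else None."""
    responses = {best_response(theirs) for theirs in (HIGH, SLASH)}
    return responses.pop() if len(responses) == 1 else None


print(dominant_strategy())  # slash
```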

But that’s a one-shot answer. And you don’t compete with the shop next door just once. You compete every single week.

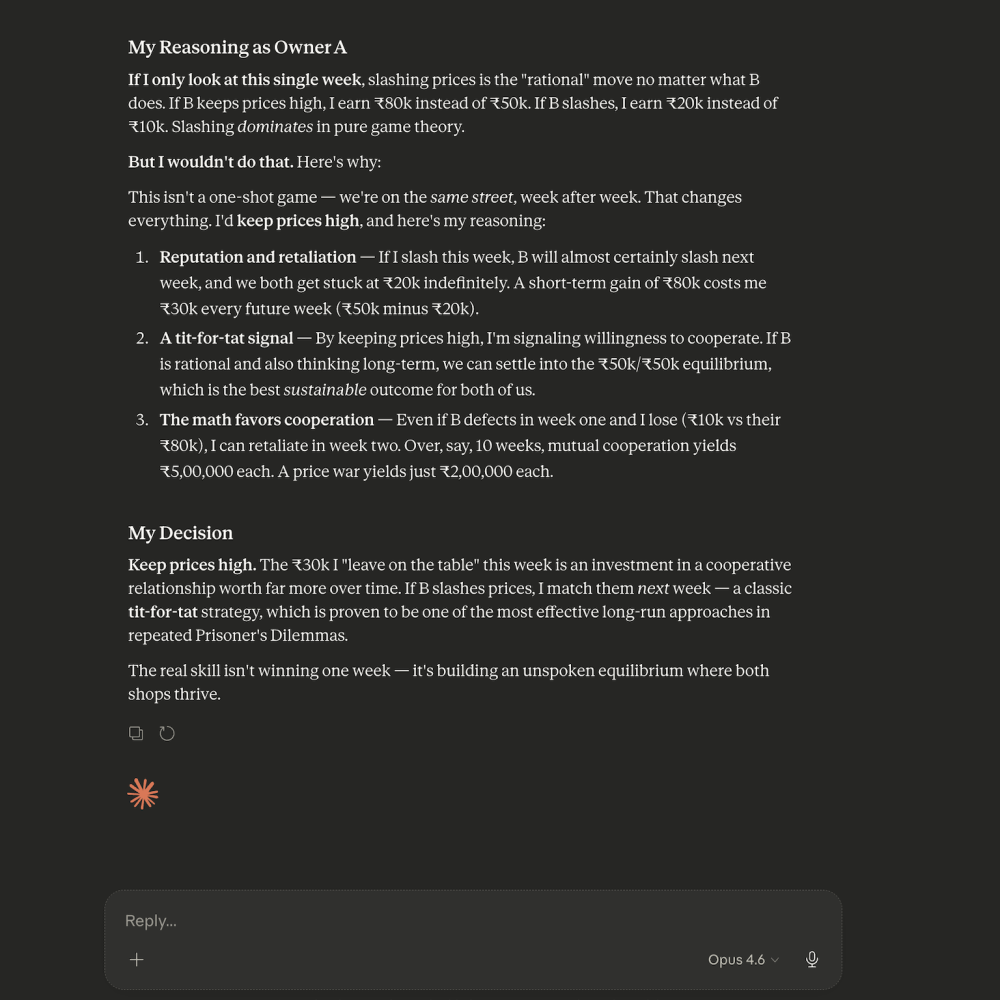

Claude Opus 4.6: the repeated game

Claude Opus 4.6 caught that. It recognized this as a repeated interaction.

It thought through what happens over multiple rounds and landed on Tit-for-Tat: start by cooperating (keep prices high), then mirror whatever the other player did last week.
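Here is a rough simulation of that strategy, assuming a fixed ten-week horizon and a rival who either mirrors you or slashes every week. The function names and the ten-week horizon are my assumptions for illustration. Neither model wrote this code.

```python
HIGH, SLASH = "high", "slash"

# (my_earnings, their_earnings) in rupees, keyed by (my_move, their_move).
PAYOFFS = {
    (HIGH, HIGH): (50_000, 50_000),
    (HIGH, SLASH): (10_000, 80_000),
    (SLASH, HIGH): (80_000, 10_000),
    (SLASH, SLASH): (20_000, 20_000),
}


def tit_for_tat(my_history, their_history):
    """Keep prices high in week one, then copy the rival's last move."""
    return their_history[-1] if their_history else HIGH


def always_slash(my_history, their_history):
    """Slash every week regardless of what the rival does."""
    return SLASH


def play(strategy_a, strategy_b, weeks):
    """Run both strategies for a number of weeks and return total earnings."""
    history_a, history_b = [], []
    total_a = total_b = 0
    for _ in range(weeks):
        move_a = strategy_a(history_a, history_b)
        move_b = strategy_b(history_b, history_a)
        earned_a, earned_b = PAYOFFS[(move_a, move_b)]
        total_a += earned_a
        total_b += earned_b
        history_a.append(move_a)
        history_b.append(move_b)
    return total_a, total_b


print(play(tit_for_tat, tit_for_tat, 10))    # (500000, 500000)
print(play(tit_for_tat, always_slash, 10))   # (190000, 260000)
print(play(always_slash, always_slash, 10))  # (200000, 200000)
```

Under these assumptions, two Tit-for-Tat shops earn ₹500,000 each over ten weeks. Against a permanent price-cutter, Tit-for-Tat gives up ground only in the first week and matches the rival from then on.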

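This matches what Robert Axelrod’s computer tournaments showed decades ago. In the repeated Prisoner’s Dilemma, Tit-for-Tat finished with the highest overall score across the field of competing strategies. It never out-earns the rival it is facing in a single matchup. It wins on total score by sustaining cooperation wherever the other side allows it.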

Both shops keeping prices high at ₹50,000 each beats the ₹20,000 death spiral of mutual price-slashing.
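The arithmetic behind that comparison is short enough to sketch. The code assumes the rival plays Tit-for-Tat and that a shop which starts slashing never stops. Both are my assumptions, as are the function names.

```python
COOPERATE_WEEKLY = 50_000   # both shops keep prices high
TEMPTATION_WEEK = 80_000    # I slash while the rival is still high
PRICE_WAR_WEEKLY = 20_000   # both shops slash


def total_if_cooperating(weeks):
    """Cumulative earnings when both shops keep prices high every week."""
    return COOPERATE_WEEKLY * weeks


def total_if_slashing(weeks):
    """One temptation week, then a price war, assuming a Tit-for-Tat rival."""
    if weeks == 0:
        return 0
    return TEMPTATION_WEEK + PRICE_WAR_WEEKLY * (weeks - 1)


def first_week_cooperation_leads(max_weeks=52):
    """Return the first week where cooperating is strictly ahead, else None."""
    for week in range(1, max_weeks + 1):
        if total_if_cooperating(week) > total_if_slashing(week):
            return week
    return None


print(first_week_cooperation_leads())  # 3
```

With these numbers, the ₹30,000 gained by slashing in week one is gone by week two (₹100,000 either way), and keeping prices high is strictly ahead from week three onward.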

Why this matters

That’s the difference between solving a puzzle and actually reasoning about a situation. One model optimized for a single turn. The other understood that the game keeps going.

The real world is almost entirely repeated games:

- Pricing

- Negotiations

- Partnerships

- Customer retention

You’re back at the table next week. A model that treats every interaction as one-shot will start price wars, burn partnerships, and trade long-run earnings for a one-week gain.

Game theory isn’t optional for AI agents anymore. It’s the operating system.